paper · 2026

QKV Decomposition for Transformer XAI

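Diagnose transformer prediction failures from weights alone and correct them by retraining a single layer. GPT-2 capital-city accuracy 2/8 → 8/8, zero side effects, and also achievable with a V-only Wv slice (590K params).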

What this paper does

We present a method for diagnosing transformer prediction errors and surgically correcting them through Q/K/V weight analysis. By decomposing attention head weights into query functions, key responses, and value channels, the method identifies failure causes without running any input through the model.
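As a minimal sketch of what a weight-only decomposition can look like, the snippet below splits a fused QKV projection into per-head W_Q, W_K, and W_V matrices. It assumes the Hugging Face GPT-2 layout, where each block stores one `(d_model, 3 * d_model)` matrix with columns ordered Q, K, V. The function name `split_qkv_per_head` and the random stand-in weights are illustrative, not taken from the paper's scripts.

```python
import numpy as np


def split_qkv_per_head(c_attn_weight, n_head):
    """Split a fused (d_model, 3 * d_model) QKV projection into per-head matrices.

    Returns W_Q, W_K, W_V, each of shape (n_head, d_model, d_head).
    Assumes columns are ordered [Q | K | V], with heads contiguous inside each part.
    """
    d_model, fused = c_attn_weight.shape
    if fused != 3 * d_model:
        raise ValueError("expected a fused weight of shape (d_model, 3 * d_model)")
    if d_model % n_head != 0:
        raise ValueError("d_model must be divisible by n_head")
    d_head = d_model // n_head

    # Each part has shape (d_model, d_model).
    w_q, w_k, w_v = np.split(c_attn_weight, 3, axis=1)

    def per_head(w):
        # (d_model, n_head * d_head) -> (n_head, d_model, d_head)
        return w.reshape(d_model, n_head, d_head).transpose(1, 0, 2)

    return per_head(w_q), per_head(w_k), per_head(w_v)


# Random stand-in for one layer's fused weight (hypothetical values, GPT-2 small sizes).
rng = np.random.default_rng(0)
d_model, n_head = 768, 12
c_attn_weight = rng.standard_normal((d_model, 3 * d_model)) * 0.02

W_Q, W_K, W_V = split_qkv_per_head(c_attn_weight, n_head)

# An input-free quantity: the bilinear form that scores query direction i
# against key direction j for one head (head index 8 chosen arbitrarily).
qk_head = W_Q[8] @ W_K[8].T  # shape (d_model, d_model)
```

With real weights, `c_attn_weight` would be replaced by the layer's stored projection matrix; everything after that point needs no forward pass.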

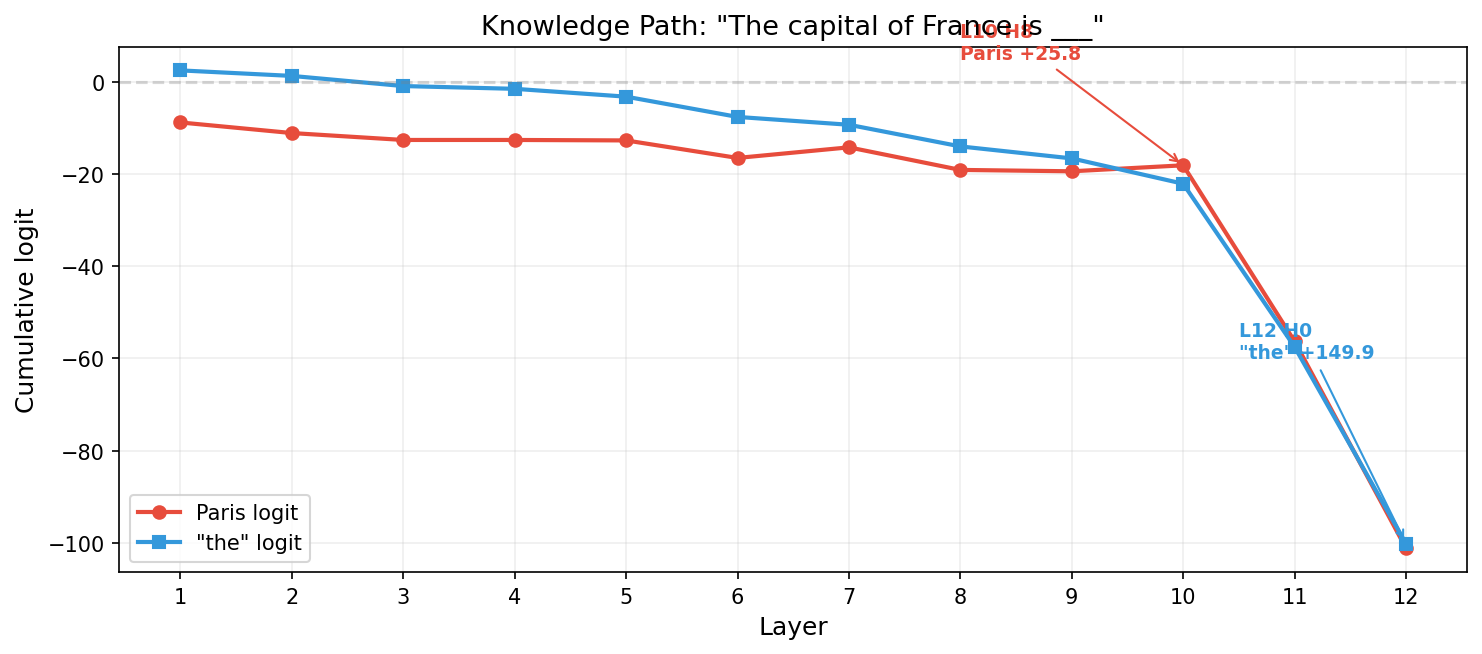

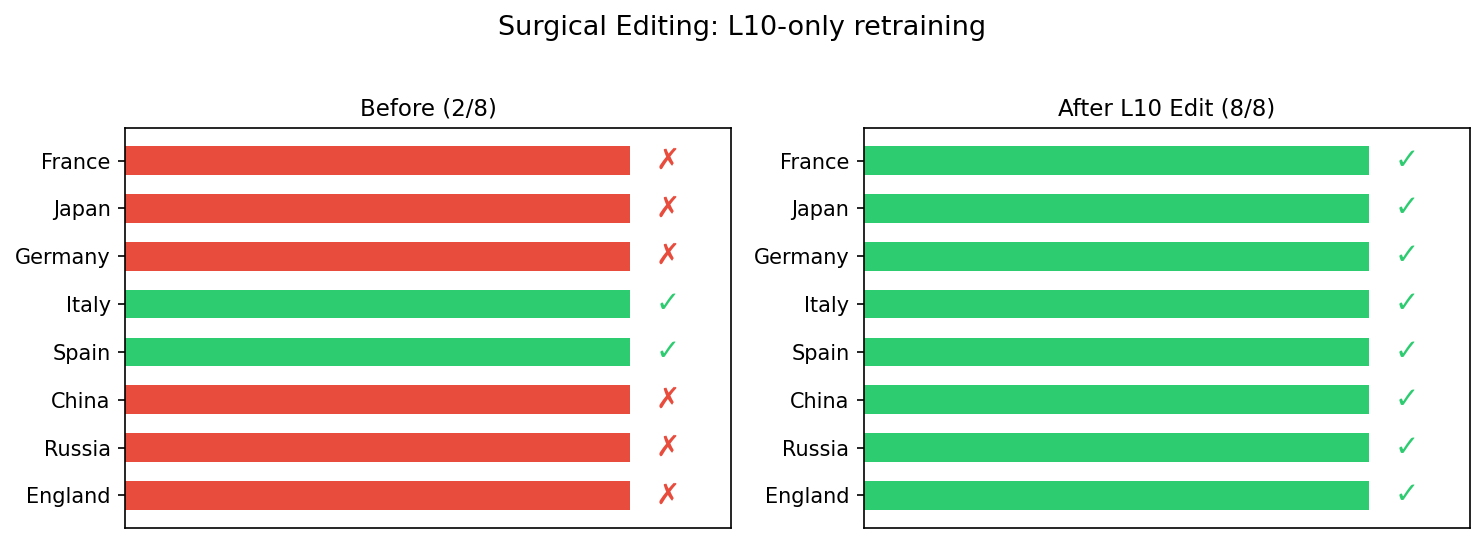

Applied to GPT-2: factual knowledge (e.g., France→Paris) emerges at layer 10 head 8 (+25.8 logit contribution) and is subsequently reversed at layer 12 head 0 (+149.9 for “the”). Targeted retraining of only the diagnosed layer recovers knowledge accuracy from 2/8 to 8/8 capitals with zero side effects (general capability 11/15 maintained, PPL 42.7 → 42.6).
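To make "logit contribution" concrete, here is a hedged sketch of direct logit attribution for a single head: the head's output is projected through its slice of the output matrix and then through the unembedding. Whether the figures above were computed exactly this way is not stated here. The sketch ignores the final LayerNorm, uses toy dimensions, and all names are hypothetical.

```python
import numpy as np


def head_logit_contribution(z_head, w_o_head, w_unembed, token_id):
    """Direct contribution of one head's output to one token's logit.

    z_head:    (d_head,)          head output at the final position
    w_o_head:  (d_head, d_model)  this head's slice of the output projection
    w_unembed: (d_model, vocab)   unembedding matrix
    Simplification: the final LayerNorm is ignored.
    """
    resid_write = z_head @ w_o_head  # what the head writes to the residual stream
    return float(resid_write @ w_unembed[:, token_id])


# Toy sizes and random values, for illustration only.
rng = np.random.default_rng(0)
d_head, d_model, vocab = 4, 8, 10
z = rng.standard_normal(d_head)
w_o = rng.standard_normal((d_head, d_model))
w_u = rng.standard_normal((d_model, vocab))

contribs = np.array(
    [head_logit_contribution(z, w_o, w_u, t) for t in range(vocab)]
)
```

Because the map is linear, per-head contributions add up, so a large positive contribution to one token in an early layer can be outweighed by a larger contribution to a different token later on.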

The model already possesses this knowledge internally; the failure is one of routing, not absence.

Why it matters

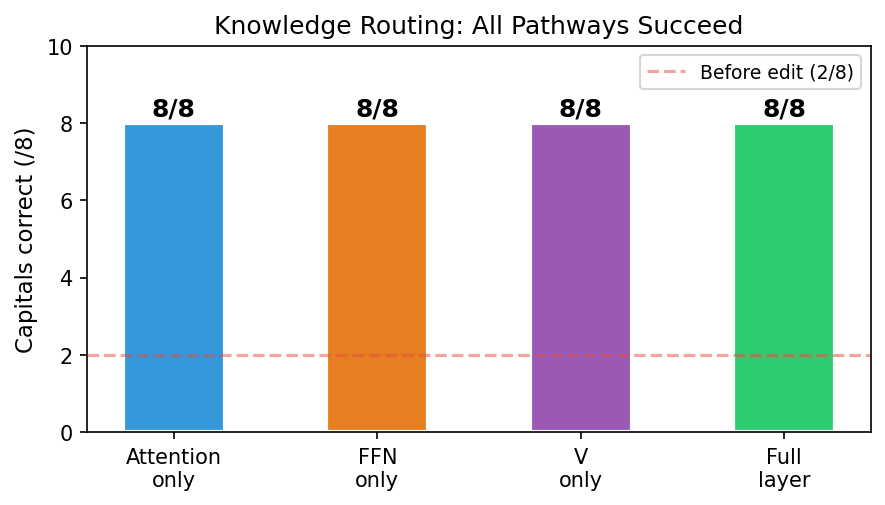

Routing correction can be achieved through attention V, FFN, or even a V-only update (Wv slice, 590K parameters, about 0.5% of GPT-2). Knowledge routing is not confined to FFN layers, contrary to the common interpretation behind ROME and similar methods.
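The 590K figure can be checked with a short sketch. It assumes the Wv slice is the V third of one layer's fused `(768, 2304)` QKV matrix, and that GPT-2 small has about 124M parameters in total. Both are assumptions about the setup rather than details confirmed above. The masked update at the end is a hypothetical illustration of training only that slice.

```python
import numpy as np

D_MODEL = 768
# Assumed total parameter count for GPT-2 small (about 124M).
GPT2_SMALL_PARAMS = 124_439_808


def v_only_mask(d_model):
    """Boolean mask over a fused (d_model, 3 * d_model) QKV weight selecting only V."""
    mask = np.zeros((d_model, 3 * d_model), dtype=bool)
    mask[:, 2 * d_model:] = True  # columns are assumed to be ordered [Q | K | V]
    return mask


def masked_sgd_step(weight, grad, mask, lr):
    """One plain SGD step that updates only the entries selected by the mask."""
    return weight - lr * np.where(mask, grad, 0.0)


mask = v_only_mask(D_MODEL)
n_trainable = int(mask.sum())  # 768 * 768 = 589,824
fraction = n_trainable / GPT2_SMALL_PARAMS

# Hypothetical weight and gradient, to show that Q and K stay untouched.
rng = np.random.default_rng(0)
weight = rng.standard_normal((D_MODEL, 3 * D_MODEL))
grad = rng.standard_normal((D_MODEL, 3 * D_MODEL))
updated = masked_sgd_step(weight, grad, mask, lr=1e-3)
```

Under these assumptions the slice holds 589,824 weights, a little under half a percent of the model, which is consistent with the 590K and 0.5% figures.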

This opens correction pathways beyond MLP-only model editing.

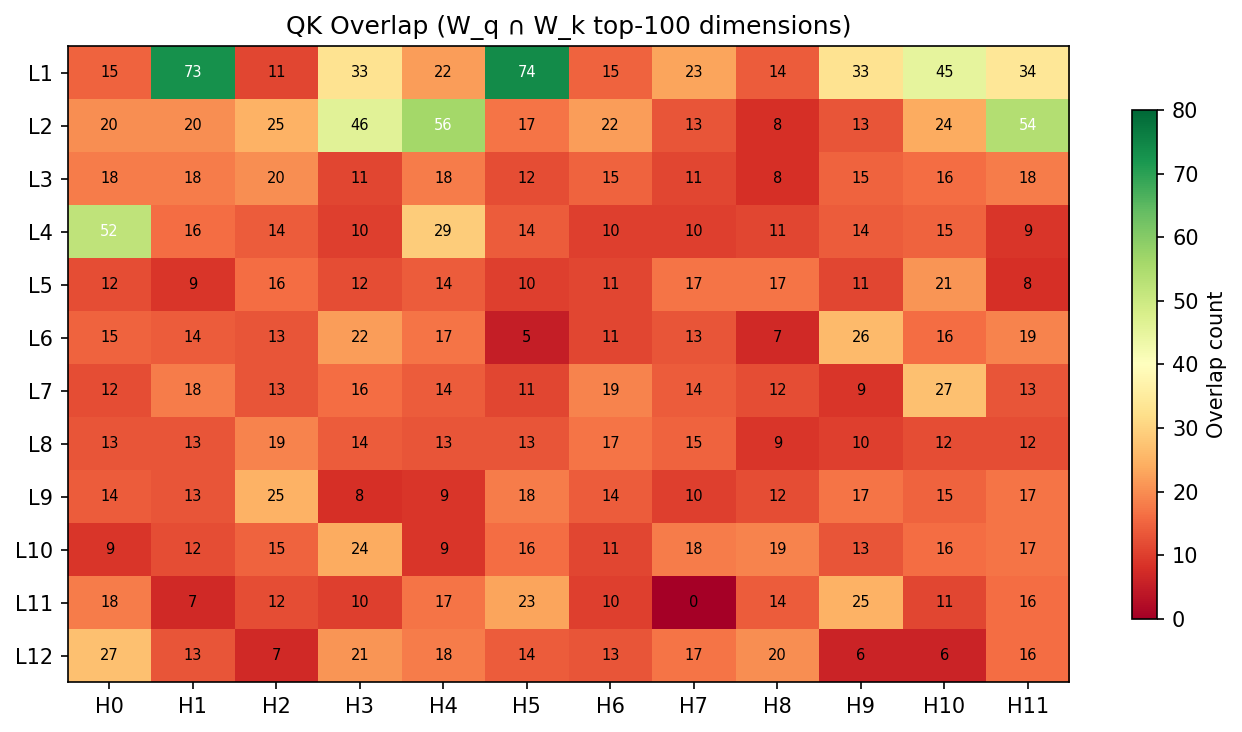

Static diagnostics (input-free)

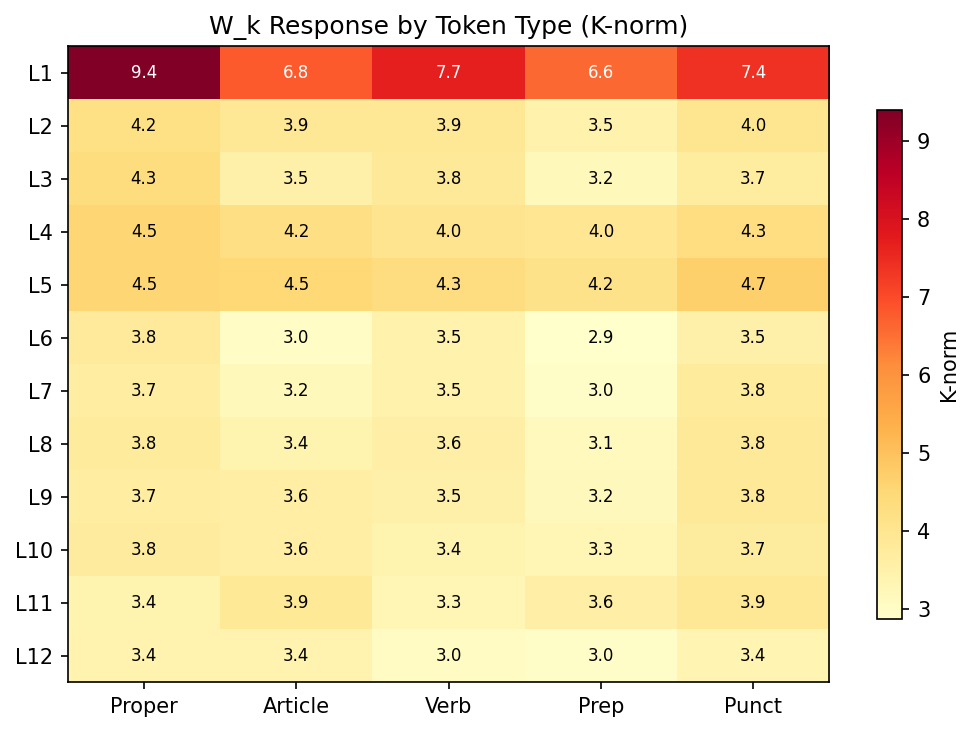

The method also classifies all 144 attention heads in GPT-2 small directly from weights, with no input pass required.

Cross-validation

- Captum (gradient × activation): top-1 neuron agreement (n=2440)

- TransformerLens logit lens: same routing layer identified

- Activation patching: confirms causal involvement (-0.44 logit when neuron zeroed)
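The last check in the list above can be illustrated with zero-ablation, the simplest form of this kind of intervention: set one hidden neuron to zero and measure the change in the target token's logit. The toy one-layer MLP below is a stand-in, not GPT-2, and every name and value in it is hypothetical.

```python
import numpy as np


def gelu(x):
    """Tanh approximation of GELU."""
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x**3)))


def mlp_logits(x, w_in, w_out, w_unembed, ablate=None):
    """Logits of a toy residual MLP block; optionally zero one hidden neuron."""
    hidden = gelu(x @ w_in)
    if ablate is not None:
        hidden = hidden.copy()
        hidden[ablate] = 0.0
    return (x + hidden @ w_out) @ w_unembed


def ablation_effect(x, w_in, w_out, w_unembed, neuron, token_id):
    """Change in the target logit when the given neuron is zeroed."""
    base = mlp_logits(x, w_in, w_out, w_unembed)[token_id]
    ablated = mlp_logits(x, w_in, w_out, w_unembed, ablate=neuron)[token_id]
    return float(ablated - base)


# Toy sizes and random weights, for illustration only.
rng = np.random.default_rng(0)
d_model, d_hidden, vocab = 8, 32, 10
x = rng.standard_normal(d_model)
w_in = rng.standard_normal((d_model, d_hidden))
w_out = rng.standard_normal((d_hidden, d_model))
w_u = rng.standard_normal((d_model, vocab))

neuron, token_id = 5, 3
effect = ablation_effect(x, w_in, w_out, w_u, neuron, token_id)
```

A negative effect means the neuron was pushing the target logit up, which is the sense in which a logit drop after zeroing indicates causal involvement.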

Verify

- Zenodo — paper PDF + permanent DOI for citation.

- HuggingFace dashboard — corrected GPT-2 L10 weights, QKV analysis scripts, interactive dashboard, all figures. Anyone can re-run the 8 capital-city prompts and check.

arXiv preprint: forthcoming.